A/B Test

A/B Test is an experimental method for comparing the performance of two stimuli. It exposes respondents to each stimulus individually, allowing a focussed comparison between the two. The stimuli can be anything from claims to packaging to two different products. A/B testing can be used for:

- Determining which ad is more effective at increasing brand awareness

- Finding which packaging will increase purchase intent

- Deciding between two claims

Use A/B Test to perform a focussed comparison between two items.

The survey flow compares two products on 4 questions.

A/B Tests can be automatically translated to more than 30 languages.

Bring your own respondents or source real human respondents swiftly and at scale through us.

Main outputs of A/B tests

Table of outputs for each question:

Summary metrics for each question in the test, shown per stimulus for easy comparison.

At-a-glance summary allowing for quick comparison between stimuli with the option of drilling down into detailed metrics of any question for any stimulus.

Detailed question output for a particular stimulus:

Displays detailed outputs for an in-depth look at responses to a particular question.

Conjointly provides detailed statistics for each question and each stimulus if you are interested in the nitty-gritty details, such as the distribution of responses, medians, and ranges.
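To make these statistics concrete, here is a minimal Python sketch of how a distribution, median, and range could be computed per stimulus. It is an illustration only, not Conjointly's implementation: the `summarise` helper, the stimulus names, and the 1 to 5 ratings are all hypothetical.

```python
from collections import Counter
from statistics import median


def summarise(ratings):
    """Return the count, distribution, median, and range of numeric ratings."""
    return {
        "n": len(ratings),
        "distribution": dict(sorted(Counter(ratings).items())),
        "median": median(ratings),
        "range": (min(ratings), max(ratings)),
    }


# Hypothetical 1-5 purchase-intent ratings, one list per stimulus.
responses = {
    "Stimulus A": [4, 5, 3, 4, 2, 5, 4],
    "Stimulus B": [3, 3, 2, 4, 1, 3, 5],
}

summary = {name: summarise(ratings) for name, ratings in responses.items()}

for name, stats in summary.items():
    print(name, stats)
```

Placing the two summaries side by side mirrors the at-a-glance comparison, while the per-stimulus dictionary corresponds to the drill-down view.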

Segmentation of the market

Find out how preferences differ between segments.

With Conjointly, you can split your reports into various segments using the information collected automatically by our system, respondents' answers to additional questions (for example, multiple choice), or GET variables. For each segment, we provide the same detailed analytics as described above.
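As an illustration of segmenting by a GET variable, the sketch below reads a query-string parameter from each respondent's survey link and averages ratings per segment and stimulus. It makes several assumptions: the `source` variable name, the `example.com` URLs, the record layout, and the helper functions are hypothetical and do not describe how Conjointly stores or processes data.

```python
from collections import defaultdict
from statistics import mean
from urllib.parse import parse_qs, urlparse


def get_variable(url, name, default="unknown"):
    """Read one GET (query-string) variable from a URL."""
    query = parse_qs(urlparse(url).query)
    return query.get(name, [default])[0]


def mean_by_segment(records, variable):
    """Average rating for each (segment, stimulus) pair."""
    groups = defaultdict(list)
    for record in records:
        segment = get_variable(record["url"], variable)
        groups[(segment, record["stimulus"])].append(record["rating"])
    return {key: mean(values) for key, values in groups.items()}


# Hypothetical responses; "source" is an assumed GET variable name.
records = [
    {"url": "https://example.com/survey?source=email", "stimulus": "A", "rating": 4},
    {"url": "https://example.com/survey?source=email", "stimulus": "A", "rating": 5},
    {"url": "https://example.com/survey?source=email", "stimulus": "B", "rating": 3},
    {"url": "https://example.com/survey?source=social", "stimulus": "A", "rating": 2},
    {"url": "https://example.com/survey?source=social", "stimulus": "B", "rating": 4},
    {"url": "https://example.com/survey?source=social", "stimulus": "B", "rating": 5},
    {"url": "https://example.com/survey", "stimulus": "A", "rating": 3},
]

segment_means = mean_by_segment(records, "source")

for key, value in sorted(segment_means.items()):
    print(key, value)
```

Respondents whose link carries no such variable fall into an `unknown` segment here, so they are not silently dropped from the comparison.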

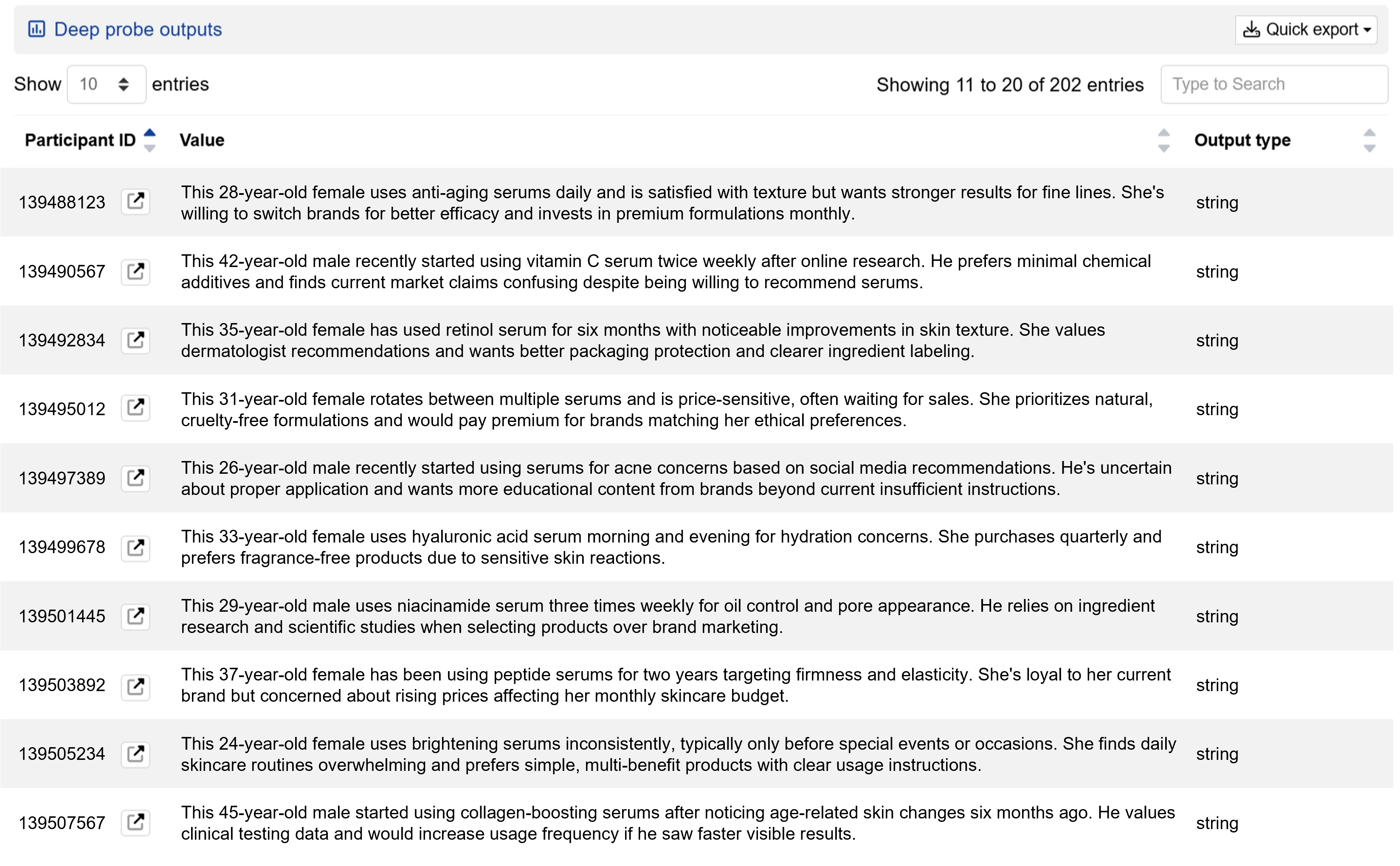

Deep probe analysis

Explore survey data further and get structured results in minutes.

Deep probe automatically analyses your survey data using a large language model (LLM). Whether you want to identify key themes in open-ended responses, analyse individual respondents, or extract specific insights, simply describe your analysis request and receive structured outputs in minutes rather than days.